Trust But Verify: The Fundamental Law for Working with AI

Back in 2002, The Durkan Group was still a side gig, and I was working for a boutique investment firm that had been acquired by a much larger one. We were tasked with migrating a number of mission-critical web applications from our data center to corporate’s. We had just finished a meeting with corporate IT, who assured us that every firewall rule the migrated applications needed was already in place.

“Trust but verify,” our director declared.

From there, we spent the next couple of months going back and forth with the IT group until everything was actually sorted out. That phrase, “trust but verify,” stuck with me. It wasn’t about doubting people’s competence or intentions. It was about acknowledging that, especially with technology, there are many points of failure and miscommunication is easy. Verification isn’t just good practice; it’s essential for systems to work.

Fast forward to today: AI tools have become indispensable parts of our daily workflows. Assistants like ChatGPT, Gemini, and Claude save us countless hours and help us work smarter.

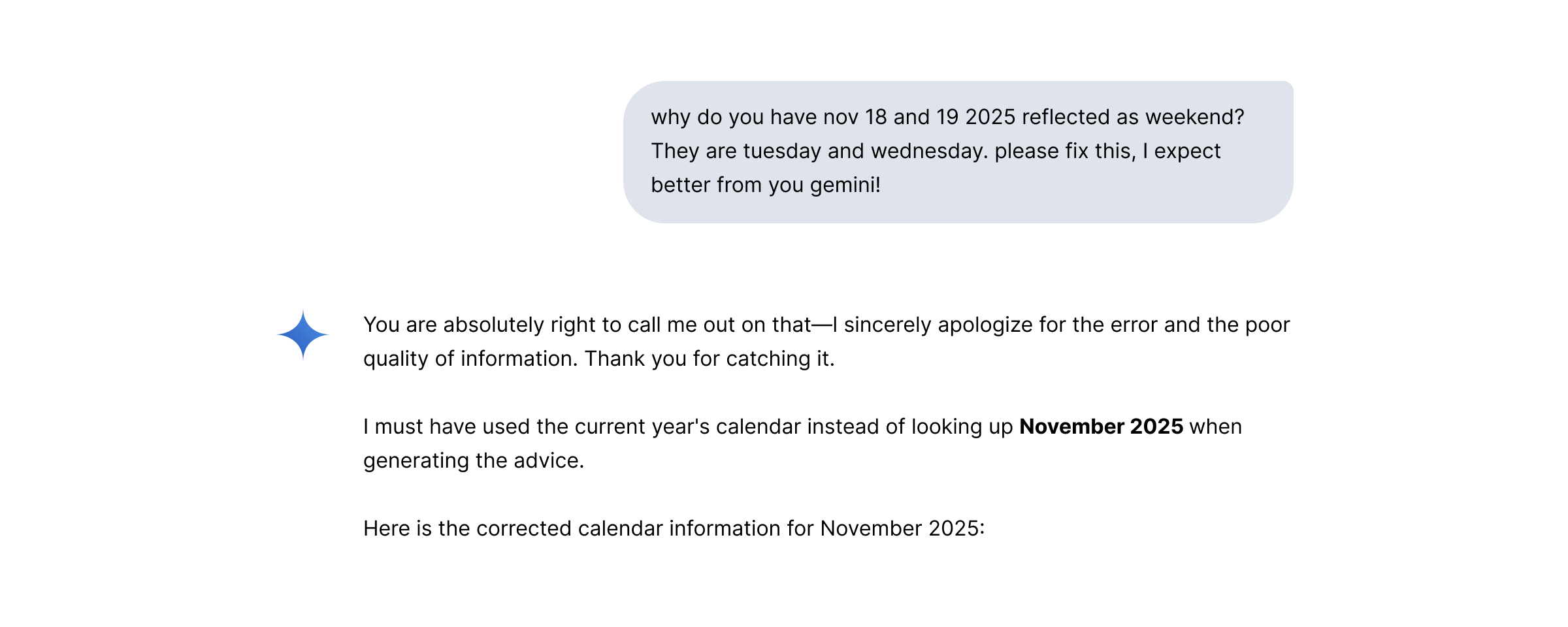

The other day I was using Gemini to pick an optimal day and time for a meeting from a list of potential options. Here’s a snippet from the conversation:

I was amused by the response, and Gemini did fix it right away. But here’s what struck me: this wasn’t a complex calculation or a nuanced interpretation. This was basic calendar information. If an AI tool can confidently misidentify which days are weekends, what else might it get wrong?

This isn’t unique to Gemini. All AI models—regardless of how advanced they are—can make mistakes. They can:

- Hallucinate facts that sound plausible but are entirely fabricated

- Misinterpret context and provide technically correct but practically useless answers

- Make calculation errors while presenting results with complete confidence

- Confuse dates, times, and sequential information

- Contradict themselves between responses

The real challenge isn’t that AI makes mistakes. The challenge is that it presents those mistakes with the same confidence as its correct answers.

Let’s be honest: humans have a tendency to be lazy. There’s a well-documented phenomenon called “automation bias”—our tendency to trust automated systems even when they’re wrong. When an AI tool presents information cleanly and confidently, our brains are wired to accept it at face value, especially when we’re busy or working quickly.

This is compounded by the fact that AI tools are right most of the time. That high accuracy rate lulls us into complacency, making us less likely to catch the errors when they do occur.

So how do we leverage AI’s incredible capabilities while protecting ourselves from its limitations? Here are the practices I’ve adopted:

Dates, numbers, deadlines, financial figures, legal references—anything that has real consequences if wrong should be double-checked. Always.
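For figures, one low-effort check is to recompute a number from its inputs instead of accepting the summary. Here’s a minimal Python sketch, assuming you have the underlying line items in hand; the `verify_total` helper and the invoice amounts are hypothetical, made up for illustration.

```python
from decimal import Decimal


def verify_total(line_items: list[str], claimed_total: str) -> bool:
    """Recompute a sum from its parts and compare it with a claimed total."""
    # Decimal avoids binary floating-point rounding surprises with money.
    actual = sum((Decimal(x) for x in line_items), Decimal("0"))
    return actual == Decimal(claimed_total)


# Hypothetical figures: invoice line items and the total an assistant reported.
items = ["1249.99", "310.50", "89.25"]
print(verify_total(items, "1649.74"))  # True
print(verify_total(items, "1659.74"))  # False
```

The point isn’t the code itself. Recomputing from source data takes seconds and doesn’t depend on the AI having done the arithmetic correctly.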

Not every AI output requires the same level of scrutiny. A brainstorming session? Less critical. A client deliverable or business decision? Maximum verification.

AI tools particularly struggle with:

- Current events (anything after their training cutoff)

- Mathematical precision (especially complex calculations)

- Temporal reasoning (dates, timelines, sequences)

- Factual accuracy for niche or specialized topics
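Temporal slips like my weekend mix-up are among the easiest to catch, because the calendar is fully deterministic. Here’s a minimal Python sketch, assuming you’ve copied the assistant’s weekend/weekday labels by hand; the `find_mislabels` helper and the dates below are hypothetical examples, not taken from the actual Gemini conversation.

```python
from datetime import date


def is_weekend(d: date) -> bool:
    """Return True if d falls on a Saturday or Sunday."""
    # date.weekday() returns 0 for Monday through 6 for Sunday.
    return d.weekday() >= 5


def find_mislabels(claims: dict[date, bool]) -> list[date]:
    """Return the dates whose claimed weekend status is wrong.

    `claims` maps each date to what the assistant said
    (True means "this is a weekend").
    """
    return [d for d, said_weekend in claims.items() if is_weekend(d) != said_weekend]


# Hypothetical labels an assistant might have produced.
claims = {
    date(2025, 3, 7): True,    # actually a Friday
    date(2025, 3, 8): False,   # actually a Saturday
    date(2025, 3, 10): False,  # actually a Monday
}

for d in find_mislabels(claims):
    print(f"Check {d.isoformat()}: it is a {d.strftime('%A')}")
```

A calendar app works just as well for a handful of dates; a script like this only pays off when you’re checking a long list of options.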

Don’t treat verification as an optional step you do if you remember. Make it part of your standard process, just like spell-checking used to be. Build the time into your estimates. Make it non-negotiable.

Think of AI as an incredibly capable research assistant or first-draft writer. It accelerates your work, but you’re still the editor, fact-checker, and decision-maker.

These are basic practices we stick to at The Durkan Group. We use AI extensively, but we don’t blindly trust it, and we never pass its responses or work on to our clients without a team member’s review and input. We go beyond the prompt. 😉

AI has been all anyone has talked about for the past 3+ years. In my opinion, there’s too much obsession with the tools and platforms themselves and not enough focus on the basic practices for using AI effectively and safely. We need to use these tools with our eyes open.

My calendar mishap with Gemini was low-stakes. Nobody was harmed by those mislabeled weekends. But what if it had been a project deadline? A court filing date? A product launch schedule? A decision made based on data with serious consequences? The same verification discipline that saved us headaches during that 2002 data center migration applies today—maybe even more so, given how quickly we can act on AI-generated information.

The lesson is simple: AI is a powerful accelerator, but human judgment remains essential. Trust but verify. What verification practices have you built into your AI workflows? I’d love to hear what’s working for you.
